As the winter months come to an end, many gardeners start to wonder when they should begin tending to their plants. While some may assume that gardening tasks should only be carried out during spring and summer, there are actually several reasons why you should consider tending your garden plants from January onwards.

Italian gardens are renowned for their beauty, elegance, and historical significance. These well-manicured landscapes have been an integral part of Italy’s cultural heritage for centuries. They showcase the country’s rich history, architecture, and horticultural expertise. Exploring these gardens provides visitors with a unique opportunity to immerse themselves in the beauty and tranquility that Italy has to offer.

In today’s fast-paced world, securing your apartment has become more important than ever. Thanks to advances in technology, various types of alarms are now available to help you protect your living space effectively.

When it comes to building or renovating your home, choosing the right materials is crucial. You want them to be not only durable and aesthetically pleasing but also environmentally friendly and cost-effective. Fortunately, advancements in technology have led to the development of innovative building materials that meet these criteria.

Drought and cold weather can have significant consequences for the crayfish harvest in Louisiana, affecting both the supply and quality of this valuable seafood. As one of the largest producers of crayfish in the United States, Louisiana plays a crucial role in meeting domestic demand and supporting local economies. Understanding how drought and cold affect this industry is essential for…

Living a more sustainable and eco-friendly lifestyle doesn’t have to be expensive or difficult. There are many simple changes you can make in your home that will not only benefit the environment but also save you money in the long run.

Home improvement projects are exciting opportunities to enhance your living space and increase the value of your property. However, amidst the excitement, homeowners must be vigilant about potential scams that could leave them with shoddy workmanship or empty pockets.

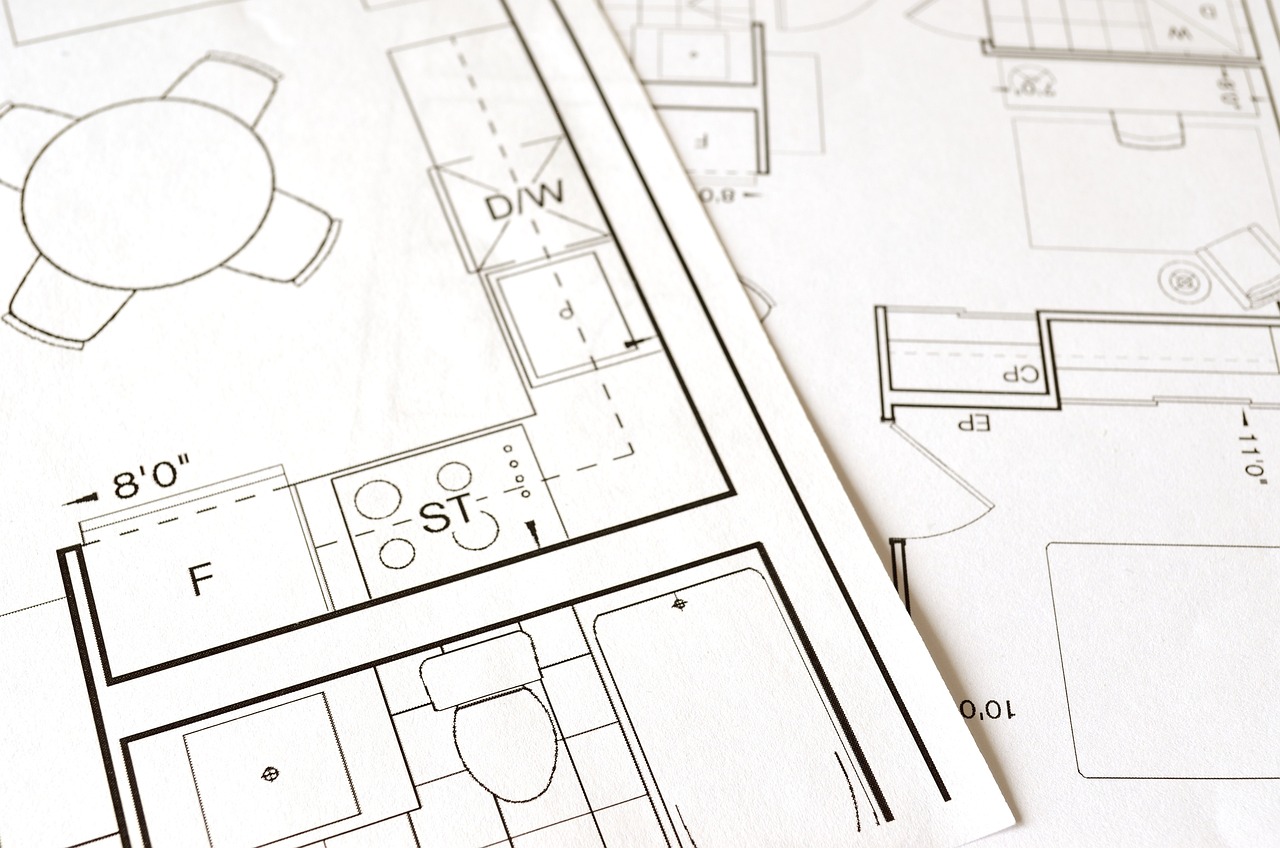

Renovating your home can be an exciting and rewarding experience. Whether you’re planning to update a single room or give your entire house a makeover, it’s important to be well-prepared before diving into any renovation project.

An open-plan living room is a popular choice for modern homes, as it offers a seamless flow between different areas of the house. However, creating an elegant and practical open-plan living room requires careful planning and design.

A small kitchen can present unique challenges when it comes to design and decor. However, with the right ideas and creativity, you can transform your tiny kitchen into a functional and stylish space.